-

Life finds a way.

-

Finished reading: Palaver by Bryan Washington 📚

-

Ghost Pipe flower (not a fungus!) spotted while hiking near Asheville, NC. Thanks Beth and Jonathan for teaching me about this plant!

-

How to Hate AI • Steve Simkins

I read a blog post recently saying that it was ok to hate AI. While I agree to some extent, I think it was missing some key points about how to hate AI. It’s easy to just hate something you don’t like. We as humans have a natural propensity to hate or dislike things we are not familiar with. The problem is that this approach is akin to putting your head in the sand or screaming at the clouds; it doesn’t actually help anyone.

-

Nobody Pushed Back: Why Engineers Stay Silent Until It’s Too Late | How to Center a Div

Someone walks through an architectural decision and nobody in that room actually agrees — they just act like they do, because saying what you really think is socially expensive. Meeting ends, decision gets made. Six months later production blows up and everyone says “we knew this would happen.”

I wonder how AI is going to change this situation. Will it become harder to push back against something AI has built, even if it’s bad? How long until engineers don’t even know what the AI is producing, and the automated decisions being made aren’t reviewed at all?

-

For 25 years, Google Search was built on a contract. The web provided the content – billions of pages, freely linked, freely crawled. In return, Google sent people back. The link was the unit of exchange. It’s what made the Web thrive as an information system: you publish, Google indexes, users click through, and value flows back to the source. Win-win.

That contract is now broken.

-

Free stuff unintentional (?) still life.

-

Some photos taken outside Taos, NM last year that I don’t think ever made it to the blog.

-

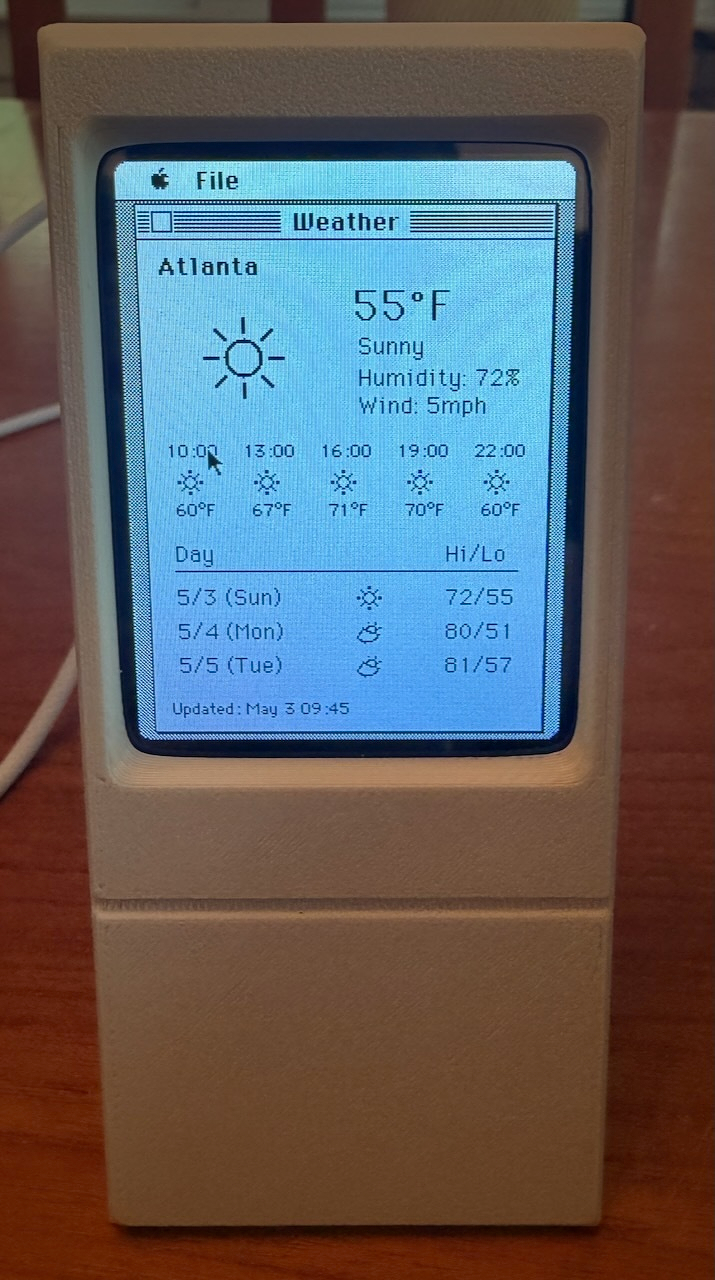

Cydintosh: Tiny classic Mac Plus emulator for displaying weather data

If you like classic Macs and home automation, you’ve got to check out the Cydintosh project:

https://github.com/likeablob/cydintosh

I assembled mine and it’s working great!

It’s amazing to me that this little board is emulating a Mac while listening to events for weather data. What a clever project!

If this appeals to you and you don’t have access to a 3D printer for the enclosure, reach out. I have plenty of PLA in this color to go around. :-)

-

BEWARE SOFTWARE BRAIN | The Verge

I’ve reviewed a lot of tech products over the past decade and a half, and all I can tell you is that it is a failure when you ask people to adapt to computers. Computers should adapt to people. Asking people to make themselves more legible to software — to turn themselves into a database — is a doomed idea.

Great essay by Nilay.

It feels to me like the whole industry has forgotten about the “personal” in personal computer. I’m someone who loves computers, or at least I used to. But I think it sounds awful to have to remold my entire life to suit the computer.

The irony here is that LLMs are the first technology that really could make the PC personal. But instead we are on a path to remake our lives to suit the LLM, not to mention our land, water, and electricity.

I hope that, as a society, we can course correct. If LLMs have to be a permanent part of the computing landscape, I hope that small, efficient models that run on the hardware we already have will take over. Then we might have some hope of making computers personal again.

-

Film Photography

A few friends convinced me that I should give film photography a try, so I took the plunge!

The camera I chose is the Pentax K1000, which came with a 50mm f/2 lens. I wanted something that was completely manual/analog but still had a functional exposure meter, and that’s exactly what the K1000 provides. With the exception of the meter, the camera is completely functional without a battery.

It’s been an adjustment to photograph with full manual controls, including manual focus, which I almost never need when shooting digital.

My first roll* was Kodacolor 200, and I had a few photos that I was really happy with, which I’ll share here.

*: My actual first roll was a B&W Kodak Tri-X and, sadly, the lab mistakenly destroyed it when they processed it as color. It’s a sad outcome, but I suppose it’s all part of the experience with film! Honestly, I was a little relieved that it was the lab’s mistake and not my own.

-

This barred owl lives in this magnolia tree right near our home!

-

Trump’s latest salvo to upend offshore wind: Pay firms… | Canary Media

In its efforts to block U.S. offshore wind development, the Trump administration has halted project construction, rolled back tax credits, and spread misinformation. Now, in the latest maneuver, the administration is paying a global energy giant nearly $1 billion to walk away from its plans to install turbines off the east coast. On Monday, the Interior Department said it had struck a deal with France’s TotalEnergies, which agreed to forfeit its leases for offshore wind areas near North Carolina and New York. In exchange, the Trump administration will “reimburse” the company dollar for dollar for the lease fees — and that money will be plowed into new fossil fuel projects.

Insane. Let me make sure I’m following this logic:

- Hype up fossil fuels while shitting on renewables

- Start war, spiking the price of oil

- Pay a foreign oil company to not finish projects that’d bring more clean energy into the US grid

- Also pay the same foreign oil company to build natural gas export infrastructure that locals oppose and that doesn’t add any energy to the US grid

- Laugh all the way to the bank while oil companies (and Trump) get richer and the environment, US energy grid, and US citizens suffer the costs?

-

A portal in El Morro.

-

Finished reading: Gideon the Ninth by Tamsyn Muir 📚

-

Another from Vieques

-

Goodbye from Vieques

-

Hello from Vieques

-

Phantom Obligation (Text Version) | Terry Godier

But when we applied that same visual language to RSS (the unread counts, the bold text for new items, the sense of a backlog accumulating) we imported the anxiety without the cause. Nobody is waiting.

Really good essay about the design of RSS readers. If this appeals to you, check out the app by this author that implements the ideas in this essay: Current.

In summer 2024, I started a hobby project to build a personal RSS reader, and I took an approach similar to what is described here: no unread counts, and a feed-like view of posts across all sources, organized in a way I like. I use it every day.

If I hadn’t built this, I’d probably be using Current as my daily RSS reader.
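
As a rough illustration of the idea (not the actual code from the reader mentioned above), a merged feed with no unread state could look something like this in Python. The `Post` type, the `merged_feed` function, and the newest-first ordering are all assumptions made up for this sketch; the real ordering is simply "a way I like".

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Iterable


@dataclass(frozen=True)
class Post:
    # Hypothetical minimal post record; a real reader would carry more fields.
    source: str
    title: str
    published: datetime


def merged_feed(sources: Iterable[Iterable[Post]], limit: int = 50) -> list[Post]:
    """Flatten posts from every source into one newest-first list.

    No read/unread flag is stored anywhere, so there is no backlog to count.
    """
    all_posts = [post for posts in sources for post in posts]
    # Assumed ordering: most recently published first.
    all_posts.sort(key=lambda p: p.published, reverse=True)
    return all_posts[:limit]
```

Because nothing tracks what has been read, the feed is just a window onto the most recent posts: anything that scrolls past the `limit` quietly drops off instead of piling up.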